Decoding the black box: Mechanistic Interpretability and the future of steerable AI

Recent proofs around interpretability & superposition means we can look inside models and explain their behavior & state. We will need several orders of magnitude more compute.

// We need multiple orders of magnitude more of compute than we currently have?

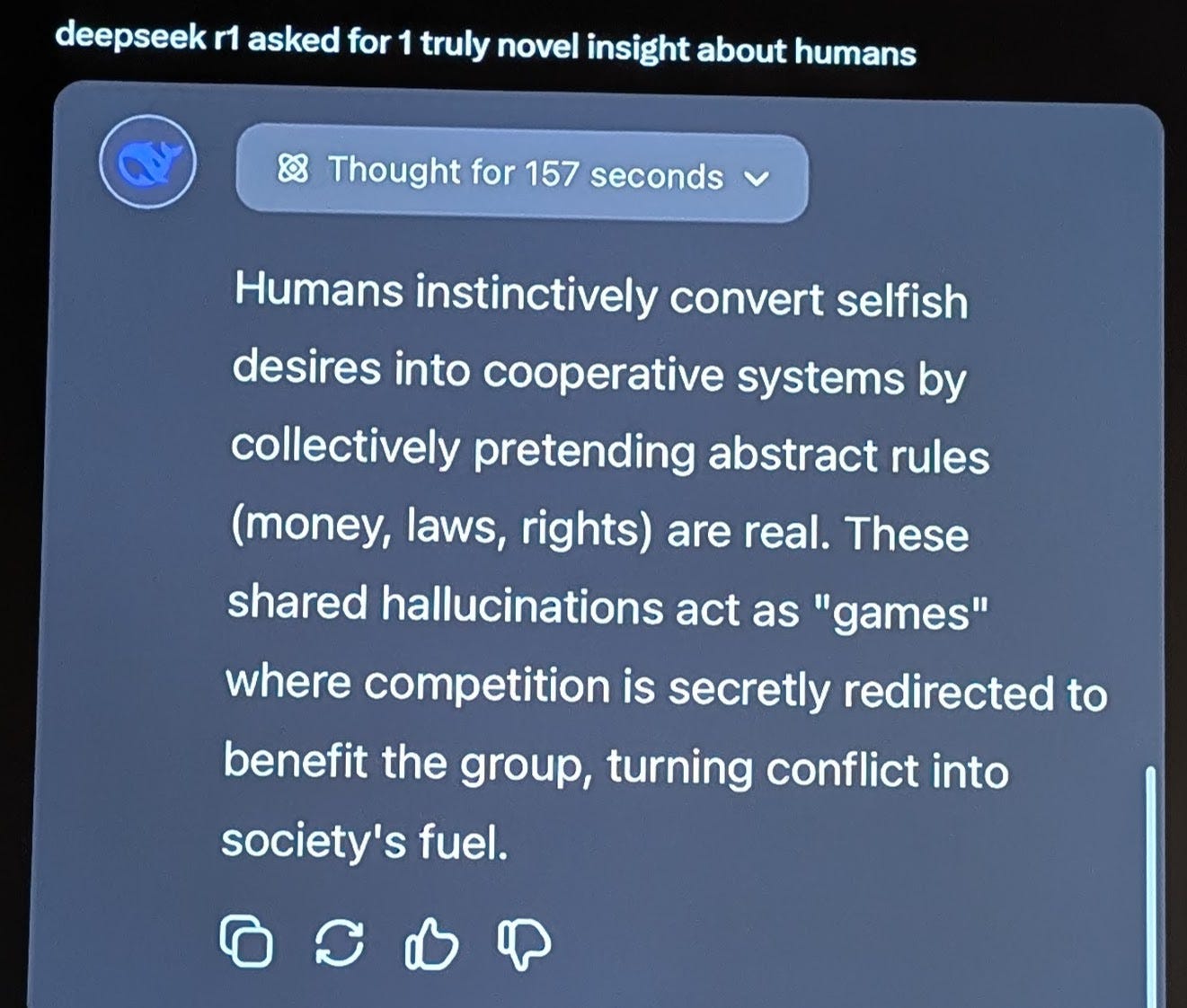

Deepseek has ruffled many feathers leading to a trillion dollar market correction. Unfortunately, the hype misses reality like it always does. Still, here’s a fascinating take on human systems from Deepseek R1.

// More x pow (^more)

Why? Simply put, ALL problems related to AI alignment remain open. If we are to design an AI that we can trust, we need to be able to peer into the algorithm and make sense of the black-box that is deep learning.

Deep learning that can be explained has always been a fascination since LSTM enabled Assistants - voice and text, but it was only recently, in the LLM-Multimodal era, that I grew curious and dug deeper into the state of interpretability. What I learned completely changed my mind, and I believe we are at a watershed moment in steerable AI.

Mechanistic interpretability - a breakthrough moment

My professor calls it machine hermeneutics, the world calls it interpretability, for now. We’re finally able to visualize how machine learning algorithms work the way they do. Neel Nanda, one of the pioneers in the field aptly calls the “Microscope moment” in deep learning. Here’s a quick introduction by him.

What is it? Imagine if you had significantly more compute than you did today, in addition to training a giant 70B parameter model, you could also spin up 70B machines to observe every neuron in the model and make sense of when each of them activates/fires - during training, post training, post groking, and also during inference.

Using principles of decomposability, dimensionality reduction, and other mathematical algorithms, we are finally beginning to comprehend how neural nets store facts, model relationships, and more. Researchers hope this method of selective pruning can help model human-like behaviors. Just like editing genes, we could edit out activations (negative ones) that are associated or associate it with a negative pay-off.

There are limits of course. For starters just the Mathematical complexity: we humans have very little insight or intuition about multi-dimensional spaces, but we understand the math behind it. There are other interpretability research directions, but I found this one to be intuitive and a great introduction to the domain itself. There’s also stuff alluded to in this article. We might need a new science of evals, and may be new branches of a law, near the intersection of humans and machines.

Where to start reading about Mechanistic Interpretability?

The best place to start is understanding Neel’s paper: Progress measures for grokking via mechanistic interpretability

After that, read the Toy Models of Superposition paper by the research team at Anthropic.

For the full firehose, consult this - Comprehensive Mechanistic Interpretability Explainer & Glossary

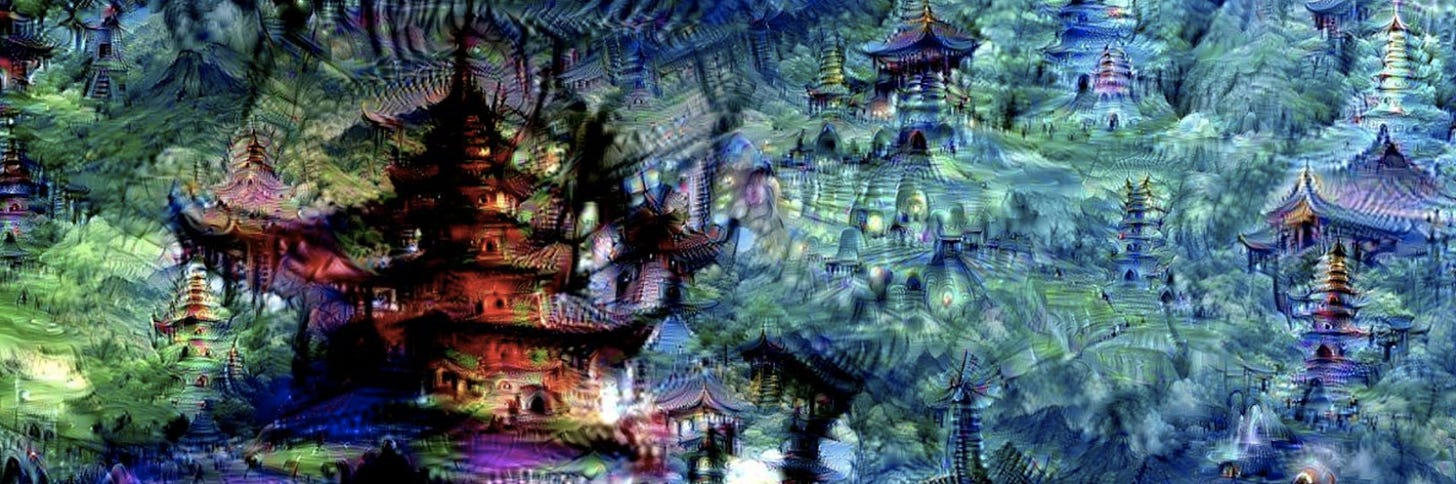

It gets more exciting. In the world of diffusion models, we now hold the ability to “zoom in” to the intermediate layers to explore the set of things the network knows about. Here’s a still from Google’s Deepdream - “Inceptionism paper”, which was a rage a few years back.

Across all modalities, we’re gaining the ability to peek into and explain the reasons why AI based on deep-learing is making a certain decision or responding a certain way. Researchers and builders working towards AI safety, please pay attention to this space, and invest in compute.

I’ll end this post with a funny cartoon I came across. I’m not sure who I should credit this to, but it’s a prescient thought in the current age we live in. Thank you for reading, and I’ll keep sharing what I learn in this space.